Douglas Seo

서경덕 - Co-Founder and Engineer of Azetta AI

My grandfather learned English in the soup kitchens of the war that reduced his childhood home to ash and rubble. His work in city government built the Korea we know today. My father learned English through RF textbooks, then brought Silicon Valley MEMS sensors to Asia. He left on a cattle-drawn carriage and returned with a Tesla. I stand on the shoulders of giants, and with the selfless love of my mother and grandmother, I learned English in kindergarten in sunny Southern California. I dreamed of becoming an inventor and building a sustainable, better future for all. Berkeley sharpened the tools; founding and shipping did the rest. I've built some cool stuff, but I'm most proud of Popper, an app that pays you for hanging out with friends and makes some money for small businesses along the way: 10k downloads, 50+ businesses, $20k in monthly revenue generated for our client businesses, and an extremely effective system that handled 300k reads/writes with sub-400ms load times. Proof that the inventor dream can ship.

Now, I am building with Taha Bouhsine. Curiosity pulls me toward the frontier of AI research; competition drives me to pioneer the technical landscape of modern history. Fluent in English, Korean, Python, Spanish, & JavaScript. Learning Japanese, LaTeX, C++, and machine learning. When I'm not building, you can find me playing with a ball or FaceTiming my gf. I'm always open to discussing new ideas and potential collaborations. I nerd out over physics, math, spirituality, philanthropy, and hacking the money system.

Experience

Founding Engineer (Employee #1 and Technical Lead) at Werkflow

Founding Engineer (Employee #1 and Technical Lead) at Werkflow Founder & CEO at Popper

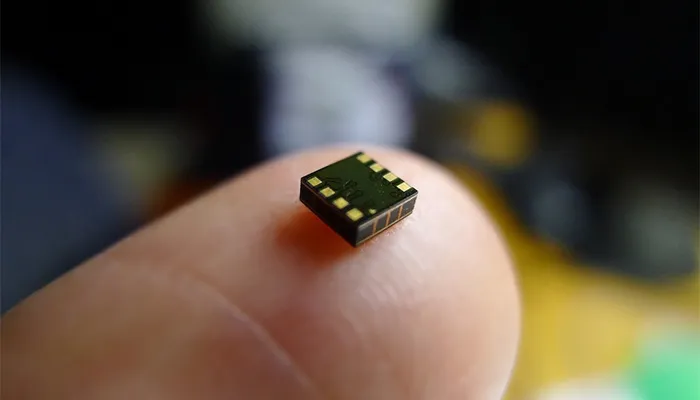

Founder & CEO at Popper Software Engineering Intern at Chirp Microsystems (TDK)

Software Engineering Intern at Chirp Microsystems (TDK)Education

B.S. in Electrical Engineering and Computer Science (EECS)

B.S. in Electrical Engineering and Computer Science (EECS)Résumé/CV

The Full Orchestra

Pipeline Parallelism, Context Parallelism, Expert Parallelism — the remaining three dimensions of distributed training, how all five compose, and the art of finding the right configuration.

Splitting the Work

From replicating models across GPUs to sharding every byte of memory — Data Parallelism, ZeRO, Tensor Parallelism, and Sequence Parallelism explained from first principles.

Infrastructure: The Unsung Hero

GPUs, memory hierarchies, NVLink, and PCIe — why the hardware layer underneath your training run shapes everything, and how to stop treating it as a black box.